One tool. Zero overhead.

No servers. No databases. No API keys. Just your code — packaged and ready for any AI.

Zero Infrastructure

No servers, no vector databases, no API keys needed to scan. Run it once, get a file. That’s it. Zero cost, zero setup, zero maintenance.

$0

Infrastructure cost

Optimized for LLMs

Strips repetitive code, adds metadata, and generates token-optimized reports. Split huge codebases into chunks with --chunk-size.
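To make the idea of chunking concrete, here is a minimal Python sketch of greedy, budget-based grouping. It is not ZigZag's implementation: the page does not say what unit --chunk-size uses or how files are grouped, so the 4-characters-per-token estimate and the names estimate_tokens and chunk_files are assumptions made purely for illustration.

```python
from typing import Iterable


def estimate_tokens(text: str) -> int:
    # Assumption: roughly 4 characters per token. Real tokenizers differ.
    return max(1, len(text) // 4)


def chunk_files(files: Iterable[tuple[str, str]], chunk_size: int) -> list[list[str]]:
    """Greedily group file paths so each chunk stays near chunk_size estimated tokens.

    Hypothetical helper, not part of ZigZag. A single file larger than the
    budget is not split; it ends up in a chunk of its own.
    """
    chunks: list[list[str]] = []
    current: list[str] = []
    used = 0
    for path, text in files:
        cost = estimate_tokens(text)
        # Close the current chunk when this file would push it over budget.
        if current and used + cost > chunk_size:
            chunks.append(current)
            current, used = [], 0
        current.append(path)
        used += cost
    if current:
        chunks.append(current)
    return chunks
```

Under these assumptions, three files of about 100 estimated tokens each and a budget of 200 would land in two chunks: the first two files together, the third on its own.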

~68%

Token reduction

Live Context with Watch Mode

OS-level file events update your report the moment you save. Your LLM always has the latest code.

Works with Any LLM

ChatGPT, Claude, Gemini, local models. One file, every AI.

Multi-Root Projects

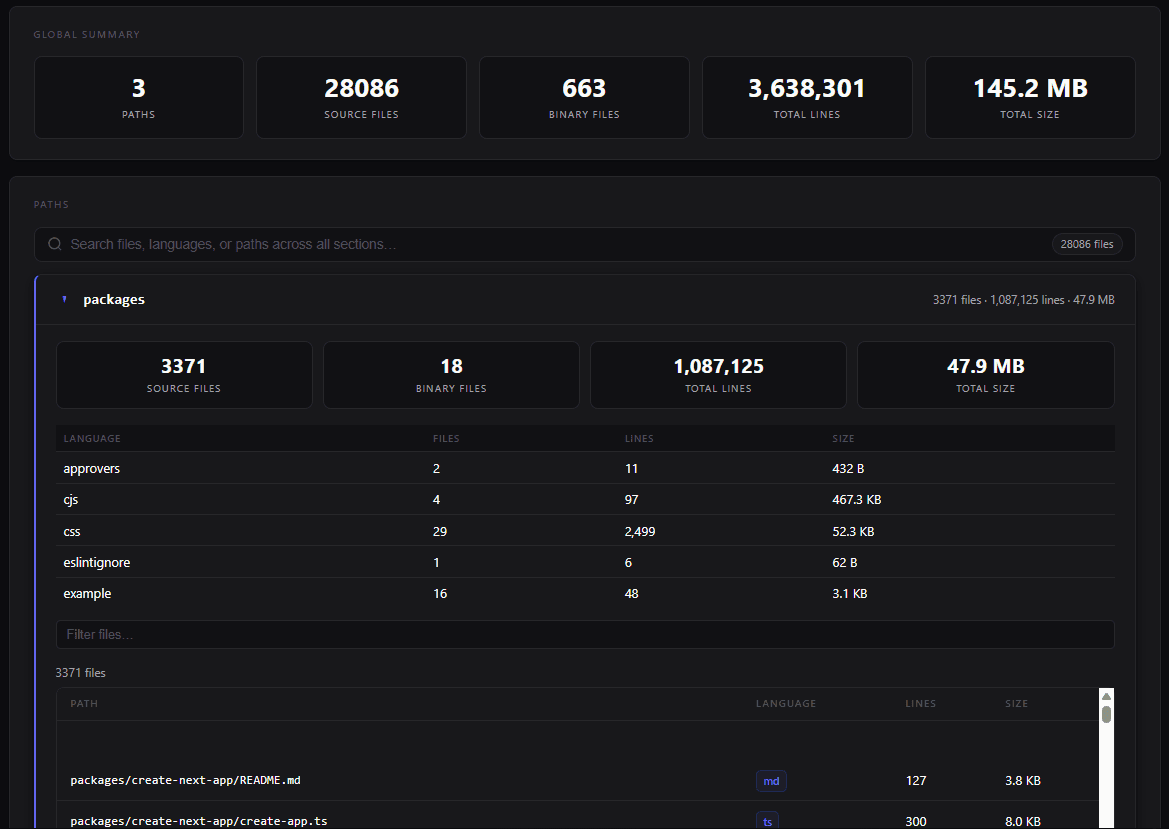

Frontend, backend, docs: scan them all at once. Pass multiple paths to get one report per path plus a unified combined.html dashboard.

HTML Dashboard

Interactive single-file dashboard with charts, virtual-scroll source viewer, and syntax highlighting. No server required.

Upload to ZagForge

Push your scan result to ZagForge with --upload. Authenticates via ZAGFORGE_API_KEY or ~/.zagforge/credentials.
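The two credential sources named above could be resolved along these lines. This is a sketch under stated assumptions, not ZigZag's actual lookup: the page does not say which source takes precedence or what the credentials file contains, so checking the environment variable first and treating the file as a bare key are both guesses, and resolve_api_key is a hypothetical name.

```python
import os
from pathlib import Path
from typing import Mapping, Optional


def resolve_api_key(
    env: Optional[Mapping[str, str]] = None,
    credentials_path: Optional[str] = None,
) -> Optional[str]:
    """Return an API key from ZAGFORGE_API_KEY or the credentials file, else None.

    Assumptions (not confirmed by the source): the environment variable wins,
    and the credentials file holds nothing but the key itself.
    """
    env = os.environ if env is None else env
    key = env.get("ZAGFORGE_API_KEY", "").strip()
    if key:
        return key

    path = (
        Path(credentials_path)
        if credentials_path
        else Path.home() / ".zagforge" / "credentials"
    )
    try:
        content = path.read_text(encoding="utf-8").strip()
    except OSError:
        # Missing or unreadable file: no credentials available.
        return None
    return content or None
```

Passing env and credentials_path explicitly keeps the function easy to exercise without touching the real environment or home directory.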

Built with Zig

Extreme performance for massive codebases. Run zigzag bench for a per-phase timing breakdown.

What happens when you run ZigZag.

0.8s

3,763 files scanned

4 formats, one pass

Why not just copy-paste?

You could. But here's what you'd be missing.

Questions? Answers.

Everything you need to know before getting started.